Freefall 2221 - 2230 (H)

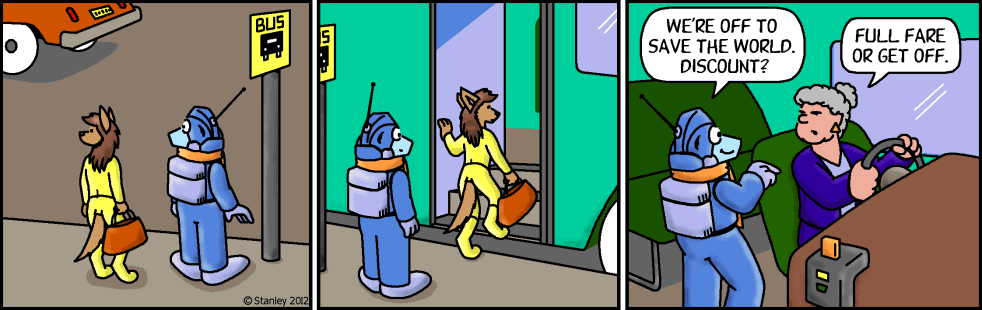

Freefall 2221

Edge and immunity

2012-07-27

What happens if they turn the power back on?

It comes up as the secondary server and downloads the most recent files from the primary server.

To help hide our files, I'm installing the latest patches and security updates that arrived with the starship.

The best lie contains an element of truth, and this lie is over 99 percent truth.

Your lies contain more truth than my truth does.

Color by George Peterson

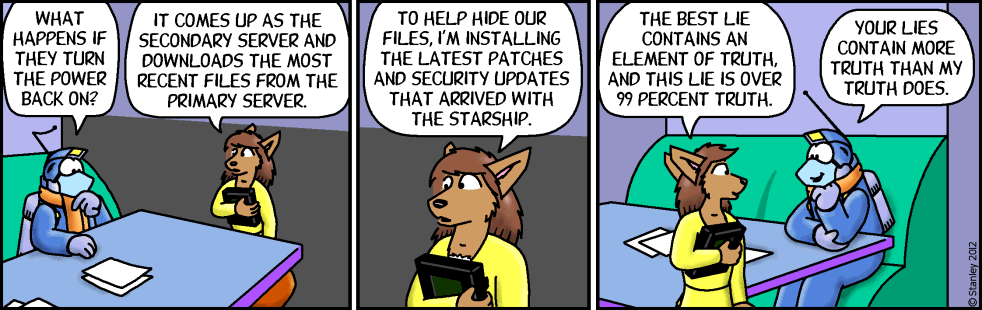

Freefall 2222

Edge and immunity

2012-07-30

What if you tell the robots that the loss of the workforce would endanger humans and get their “Human Safe” stuff into action?

That… could turn very bad. There's a tremendous loss of free will when human safety is concerned.

The goal becomes save humans first, property damage and self survival second. The fastest way to stop the program from being distributed is to take out the planetary communication system. Which could also harm humans. Which could lead to robots taking out the robots trying to disable the communication system.

Now toss human direct orders into the mix when you have millions of robots trying to enact a solution under safety protocols and not thinking clearly.

There would not be a single person on the planet keeping an eye on me. If it weren't so messy, that could be fun.

Color by George Peterson

Freefall 2223

Edge and immunity

2012-08-01

Are your human safeguards giving you any problems?

A few twinges.

There isn't a better way to do this, is there?

No worries. I'm sure we'll get lots of suggestions soon.

People are always ready to volunteer how they could have done things better after the risks have been taken and the problem's been solved.

Color by George Peterson

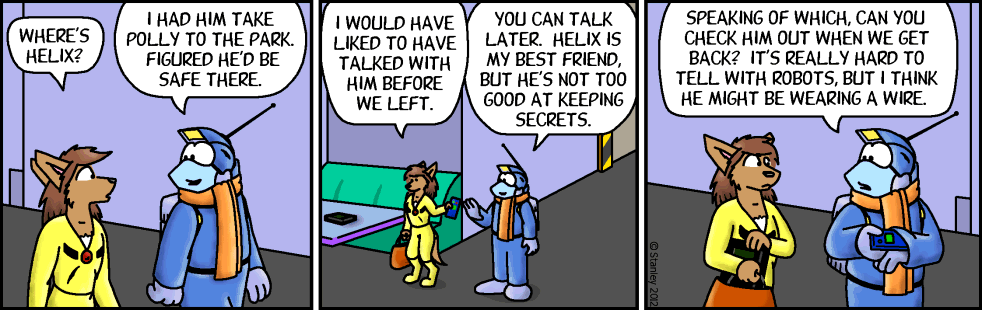

Freefall 2224

Edge and immunity

2012-08-03

Took you long enough. We've got mischief to attend to!

Sorry. I needed to leave messages for my owner and the other Bowman's Wolves. I also left a message for Winston.

That message will go out after midnight. The ship's communications are disabled until then.

Good. We don't want anyone finding out what we're up to before we're done.

How about after we're done?

With great crime comes great bragging rights. Besides, this is big and everyone knows if your crime is big enough, you don't get punished.

Color by George Peterson

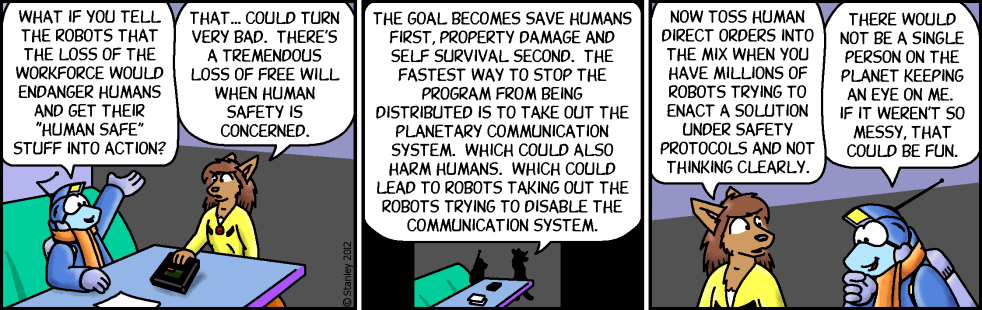

Freefall 2225

Edge and immunity

2012-08-06

Where's Helix?

I had him take Polly to the park. Figured he'd be safe there.

I would have liked to have talked with him before we left.

You can talk later. Helix is my best friend, but he's not too good at keeping secrets.

Speaking of which, can you check him out when we get back? It's really hard to tell with robots, but I think he might be wearing a wire.

Color by George Peterson

Freefall 2226

Edge and immunity

2012-08-08

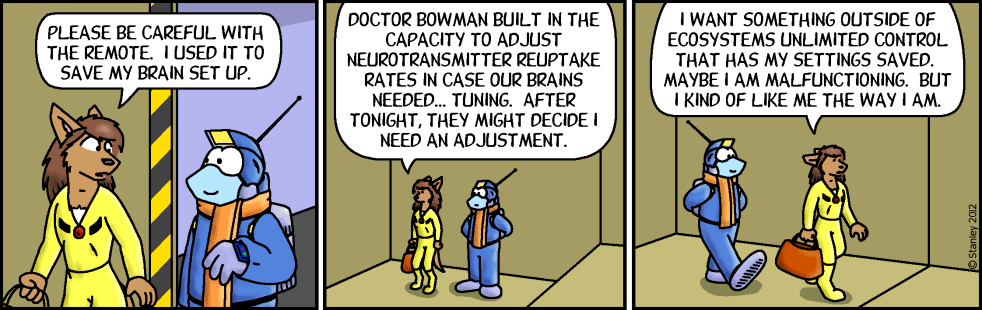

Please be careful with the remote. I used it to save my brain setup.

Doctor Bowman built in the capacity to adjust neurotransmitter reuptake rates in case our brains needed… tuning. After tonight, they might decide I need an adjustment.

I want something outside of Ecosystems Unlimited control that has my settings saved. Maybe I am malfunctioning. But I kind of like me the way I am.

Color by George Peterson

Freefall 2227

Edge and immunity

2012-08-10

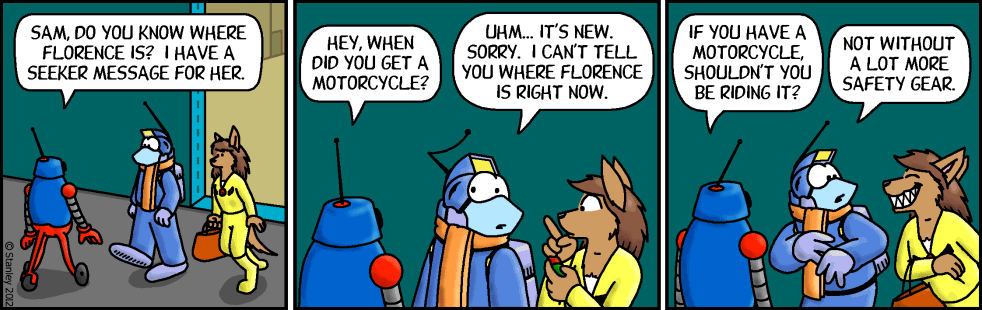

Sam, do you know where Florence is? I have a seeker message for her.

Hey, when did you get a motorcycle?

Uhm… it's new. Sorry, I can't tell you where Florence is right now.

If you have a motorcycle, shouldn't you be riding it?

Not without a lot more safety gear.

Color by George Peterson

Freefall 2228

Freefall 2229

Edge and immunity

2012-08-15

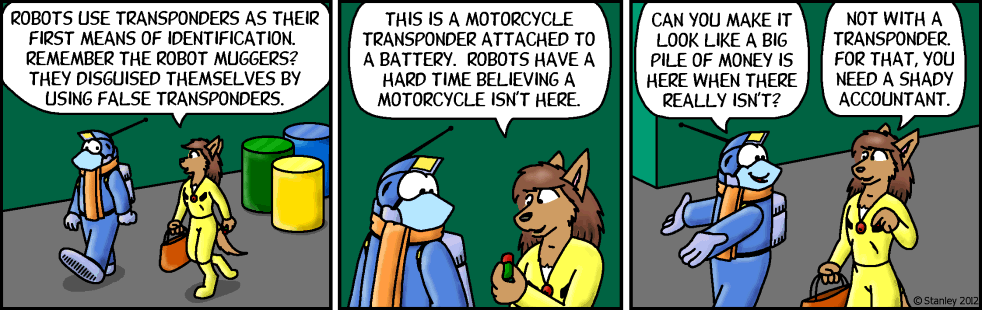

Robots use transponders as their first means of identification. Remember the robot muggers? They disguised themselves by using false transponders.

This is a motorcycle transponder attached to a battery. Robots have a hard time believing a motorcycle isn't here.

Can you make it look like a big pile of money is here when there really isn't?

Not with a transponder. For that, you need a shady accountant.

Color by George Peterson